Can AI help doctors save lives?

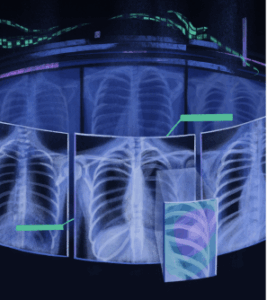

A team at Arizona State University (ASU) believes the answer is yes, and they’ve built something to prove it. Researchers led by Jianming “Jimmy” Liang at ASU’s College of Health Solutions have developed Ark+, a powerful new AI tool designed to help doctors read chest X-rays more accurately and quickly. It can detect everything from common lung infections to emerging threats like COVID-19 and avian flu, and it’s already outperforming tools developed by tech giants like Google and Microsoft.

“Ark+ is meant to reduce diagnostic mistakes, speed up the process, and make high-quality AI tools available to everyone, no matter where they are,” said Liang.

Why Chest X-Rays Matter

Chest X-rays are one of the most widely used diagnostic tools in medicine. They’re used to detect pneumonia, tuberculosis, Valley fever, heart problems, broken ribs, and even some abdominal conditions. But interpreting them correctly takes skill, and sometimes, even experienced doctors can miss subtle signs.

That’s where Ark+ comes in. It was built to help doctors catch what the eye might overlook, especially in rare or newly emerging diseases.

What Makes Ark+ Different?

The key isn’t just the AI; it’s how the AI learns.

Most AI tools are trained only on images, using basic labels like “normal” or “abnormal.” Ark+ was trained differently. Liang’s team used more than 700,000 chest X-rays alongside doctors’ written reports. This combination of visuals and expert interpretation gave Ark+ a deeper understanding of what different conditions look like in real-world patients.

“You learn more when you learn from experts,” Liang said. “And we wanted the AI to do the same.”

While companies like Google and Microsoft rely on faster, less detailed self-supervised learning, the ASU team took a slower but more rigorous approach: fully supervised learning. That decision paid off; Ark+ consistently beat out more expensive, proprietary systems in performance tests.

A Small Team with a Big Impact

A small team, including grad students DongAo Ma and Jiaxuan Pang, built the Ark+ project in collaboration with Mayo Clinic Arizona radiologist Dr. Michael Gotway. Funding was provided by the NIH, NSF, and other partners.

Despite its limited resources, the team chose to go open-source. That means hospitals and clinics around the world can download, use, and even improve the system for free.

“If we tried to compete directly with a big tech company, we probably wouldn’t win,” Liang said. “But with open-source software, we can collaborate with labs and hospitals around the world. Together, we’re stronger than any one company.”

What Ark+ Can Do

- Trained on global data: Ark+ learned from chest X-rays taken around the world, giving it a wide range of experience.

- Catches rare cases: It can recognize uncommon diseases even with limited examples.

- Adaptable and efficient: It can adjust to new conditions without requiring full retraining.

- Fair and resilient: Ark+ is designed to perform well even when data is limited or skewed.

- Secure: It protects patient privacy and can be used without sending data to the cloud.

- Open and customizable: Researchers can download the code and models, then fine-tune them for their own needs.

Beyond X-Rays

Ark+ is just the beginning. Liang’s team is now working on ways to apply the same approach to other types of medical imaging, like CT scans and MRIs. They aim to build safer AI tools usable in all hospitals, not just those with costly systems.

“We want AI in medicine to be smarter, safer, and fairer,” Liang said. “By sharing our work, we’re giving others the tools to build on it and hopefully save more lives.”

In a healthcare system where costs are high and outcomes often lag, Ark+ offers something rare: a free, open, and effective tool that gives doctors a real edge and patients a better chance.